Since the inception of Power BI Custom Connectors, I’ve been drawn to coming up with new ones that could help my life and the lives of my customers. One of the most helpful custom connectors that I’ve created so far is the Power BI REST API Custom Connector (url).

However, in order for people to use it they had to go through a really complex set of steps where they had to register their own application, modify the custom connector until they were able to actually launch the connector in their Power BI Desktop.

A big chance occured not so long ago – a new Authentication kind has been added to the Power Query SDK called the AAD (Azure Active Directory) kind which is specific for resource that use the AAD. This is similar to how you might use the ADAL library when developing a solution in .NET. I’m no programmer / developr, so I have little to no experience working with ADAL or MSAL, but this implementation of the authentication kind goes beyond just a simple library, as it also provides an embedded first-party application created by Microsoft for anyone to use.

What does this mean? It means that it’s much easier to use the connector now as it doesn’t require anything else other than the connector file itself.

In short, with this new feature for the custom connector, all you need to do to connect to the Power BI REST API from within Power BI is to:

- Download the .mez file from the GitHub repo

- Save the file in your custom connectors folder ([Documents]\Power BI Desktop\Custom Connectors). If the folder doesn’t exist, create it and plac the file in there.

- In Power BI Desktop, select File > Options and settings > Options > Security. Under Data Extensions, select (Not Recommended) Allow any extension to load without validation or warning. Select OK, and then restart Power BI Desktop.

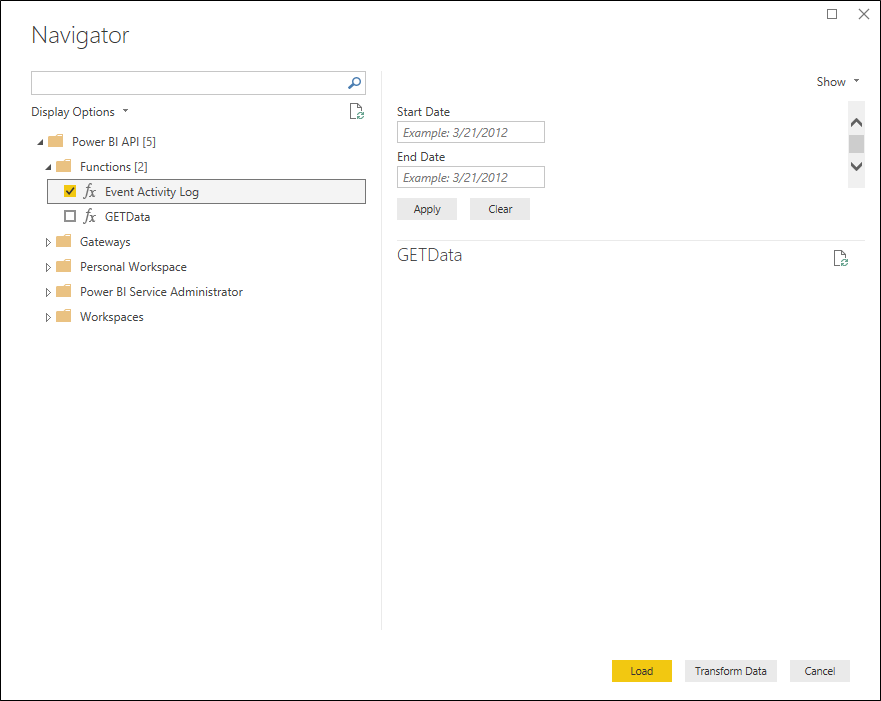

- Open Power BI Desktop and find the connector listed inside the Get Data window

Features found in the connector

With this connector you are able to connect to almost every single GET endpoint from the REST API. Most importantly, if you don’t find it in the long list of tables available, there’s a GETData function so you can insert any url of the Power BI REST API and query the data that you need.

One of the coolest features that I was able to introduce was a way to get the Event Activity Log data inside of Power BI through a function. Not only that, but the function itself can be used for Incremental Refresh, meaning that we could absolutely compile all of the event activity log data into a single dataset and analyze everything and anything with ease. You can read more about how to use that function from this article (url) that I wrote a few years ago.

How to schedule a refresh in the Power BI Service

Since this is a Power BI Custom Connector, it follows the same set of rules as other custom connectors. You can read Microsoft’s official documentation for the step by step on how to configure the scheduled refresh, using a gateway, of your dataset sourced from a data connector from here (url).

Caveats

I’ve tested this connector for the past few years in small (dozens of workspaces and users) to big environmnts (hundreds of workspaces and users), but I’ve never attempted to use this custom connector in a huge environment with thousands of workspaces and users under the same tenant. In those cases perhaps a more granular and programmatic approach might be necessary.

The primary reason for this is that the REST API is not necessarily fast in sending the data, but the connector has been purposefully built in a way that query folding is not enabled and there’s no schema defined for most tables in an effort to make this a connector that will simply always show you all the data that we get directly from the REST API in the easist to manage way. I’ve chosen usability over performance. In theory, this will impact tenants which have ‘larger than life’ workspaces and users.

If you’re getting serious about managing your Power BI tenant, then this is a must have tool for you. Give it a try and if you find any issues please report them on the GitHub repo!

Miguel Escobar Published February 21, 2021

Miguel Escobar Published February 21, 2021

This is awesome and thanks for your contribution. This is the most convenient way for me as admin to extract and analyze tenant data. I have a separate workflow to store the daily event activity log and will continue using it, but your connector allows me to quickly get the other metadata (report/dataset catalog for example) to do a hollistic tenant review.

glad it works for you!

Hi Miguel, does this connector work for the service refresh as well? I tried with enterprise gateway following the documentation below but could not find this as a connector option when adding new data source for the gateway.

https://docs.microsoft.com/en-us/power-bi/connect-data/service-gateway-custom-connectors

Sorry please disregard my earlier question. I am able to proceed by moving the connector file to a folder location that is accessible by the gateway service account. Still having an error now with “gateway cannot be reached”, but that’s probably something specific to my scenario. Thanks again!

Thank you very much for your contribution. This is very good and will help a lot in the administration of the Power BI Service. Congratulations on the idea and initiative to build the connector.

Hi Miguel — this sounds fantastic! I grabbed the .mez file, placed it in the Documents\Power BI Desktop\Custom Connectors folder, changed the security setting in PBI, but I still cannot get your connector to appear in the Get Data list. Any ideas?

Hey!

Not sure. Are you able to use other custom connectors? is the problem only with this one?

If the problem is for all connectors, then I’d suggest that you post your question on the official Power Query forum here:

https://docs.microsoft.com/en-us/answers/topics/power-query-m.html

This is actually the first one I’ve tried. I’ll have a look at that link you sent. Thanks!

Hi Miguel!

It would be very nice to add support for Dataflow (Get Dataflow Transactions), so we could track the refreshes status! I leave the documentation here: https://docs.microsoft.com/en-us/rest/api/power-bi/dataflows/getdataflowtransactions

Nice job!

Hey!

Thanks for the feedback. You can get this info using the GETData function within the connector.

You are also welcome to contribute on the GitHub repo 🙂 – that’s how most of the endpoints are appearing in there. I initially only had a few tables loaded.

Hi.

First of all, I would like to thank you for this. This is the easiest way to consume the API.

I’m facing only a small problem using the “DatasetDatasourcesAsAdmin”

“Error returned: OLE DB or ODBC error: [Expression.Error] The field ‘datasourceid’ of the record wasn’t found”

Could you help me with that?

Everything else is working well.

Thank you for this!

hey! would you mind please posting your full scenario with repro steps on the GitHub so we can better track this issue?

Here’s the link to it:

https://github.com/migueesc123/PowerBIRESTAPI/issues

Here’s also the full code for that one so you can take a look:

https://github.com/migueesc123/PowerBIRESTAPI/blob/b753bba38fb5f9e21a24bac91f76f485685d1d70/Power%20BI%20API/Power%20BI%20API/PBIAPI.pq#L626-L654

I believe this one was implemented by one of the contributors of the repo

Awesome stuff! I am having a problem though – I’m trying to pull multiple months of the “event activity log” with a filter right after “Invoked Function…”, where “Operation = GenerateEmbedToken.” This will run for a short time frame passed in the date function, for example if I pull Jan 1 to Feb 1st. But it slows to a crawl if I Select Jan 1st to today for example. Rather, it won’t even load. The only troubleshooting I’ve been able to identify is that my task manager on my laptop says memory is at 99.9% when I’m trying to pull those dates. Is this function very memory intensive? My machine has 16 gb ram, so I figured that would be enough.

Hey!

In most cases the issue is that the Power BI REST API needs to paginate for every single interval of the activity log for a given time period, so when you have a really active tenant and you try to pull multiple days of data then things can take a while to process and that’s the primary reason why you might want to enable incremental refresh for that data as suggested in the article

Hi Miguel! This is an awesome connector and just what I need. I’ve built a report in Power BI Desktop using the connector and am currently trying to configure it on our gateway. Using the Microsoft documentation on setting the custom connector up (the link in your article), I’ve dropped a copy of the latest connector file in a folder and the server where the gateway is installed. I’ve configured the folder location on the Connectors tab of the gateway app and provided the service account full permissions on the folder. I’ve also verified that I’ve used the correct service account set up with the gateway. Sadly I still can’t get the gateway to recognise the connector. Have you encountered this issue with any clients or have any other ideas why the gateway won’t pick the connector up? Thanks

Hey Jamie!

Glad you find the connector useful. That’s pretty odd – if you’re pointing the gateway to the folder where the connector is, it should pick it almost immediately without any issues.

I’ve had some cases with customers, but usually the best way to solve it is to get on a call with them and just go through my checklist to make sure that everything is set up correctly. Another viable option is to get in touch with the Power BI Support Team by open up a ticket.

It appears the solution was to keep the folder name for the custom connectors short and use a different service account instead of the default PBIEgwService account.

Glad that you were able to find a solution!

That’s a pretty odd scenario. Thanks for sharing!

I am implementing the activity pull using your connector. However, it’s taking way too long to pull the data when I am trying to pull the last 90 days. Do you know of any method that could help me to improve my performance?

I am using the connector with dynamic parameters for Start and End dates

Power query for StartDate

let

Source = Date.From((Date.AddDays(DateTime.LocalNow(),-90)))

in

Source

———————————

Power query for EndDate

let

Source = Date.From(DateTime.LocalNow()),

in

Source

———————————

Event Activity Function:

(#”StartDate” as date, #”EndDate” as date)=>

let

Source = PowerBIRESTAPI.Navigation(),

Functions = Source{[Key=”Functions”]}[Data],

EventActivityLog = Functions{[Key=”EventActivityLog”]}[Data],

#”Invoked FunctionEventActivityLog1″ = EventActivityLog(#date(Date.Year(StartDate), Date.Month(StartDate), Date.Day(StartDate)), #date(Date.Year(EndDate), Date.Month(EndDate), Date.Day(EndDate)))

in

#”Invoked FunctionEventActivityLog1″

———————————

Invoked Function:

let

Source = EventActivity(StartDate, EndDate),

#”Filtered Rows” = Table.SelectRows(Source, each ([UserId] “”)),

#”Filtered Rows1″ = Table.SelectRows(Source, each ([ActivityId] “”))

in

#”Filtered Rows1″

The issue I am having is it’s taking way too long to load, tried to load data for 6 hours then failed. Was trying to figure out if there is any option to improve the performance of data pull.

Hey!

The refresh speed relies heavily on how much data it’s trying to pull from your tenant, so it’s difficult to pinpoint if its because of your internet speed, throttling at the REST API, or you simply have a lot of data in your tenant.

I’d suggest that you use a proxy tool like Fiddler to monitor the calls and see how things are being requested to the REST API. Don’t forget that you could also implement an incremental refrehs solution so you don’t have to refresh the last 90 days all the time, but only the partitions that you see fit.

You can also modify the custom connector based on your specific needs. If you happen to find any bugs, please report those to the issues section of the GitHub repo using the link below:

https://github.com/migueesc123/PowerBIRESTAPI/issues/

Best!

Hi Miguel!

I’ve been using your connector for a bit now and it mostly works great and super easy to use, I’ve just been coming onto a bit of a problem though with the Audit Log endpoint. My scheduled refresh is failing everyday now saying that “The column ‘(Insert Column Here)’ of the table wasn’t found”. The column doesn’t matter as it will pick the next one if I remove it from the dataset. It’s erroring when I give a column a data type. The connector works fine when refreshed on desktop only in the service both with scheduled refresh and on demand. I’m lost for where to go from here, let me know if you need more information.

Thanks!

Hey!

This is one of those things that I wish I had the time and help from Microsoft to improve in the connector, but I don’t have any of those.

The way that the connector was built, and how the API works, is that it sends you the data that is available during a specific timeframe. This means that in some cases you might have a table with 15 fields for one day and for other days it might be a table with 40 fields because you had more distinct events during that period.

The refresh fails to you because you use a step that corresponds to a field that doesn’t exist in that given timeperiod.

The fix is for you to create your own table schema that has ALL the possible fields and implement some sort of table schema to your solution, so that regardless of a data refresh your query will ALWAYS have the fields that it needs and the connector is just feeding to that query as if it was “filling the blanks”. Unfortunately, I don’t have access to that table schema with all the possible outcomes from the event activity log endpoint, but if you ever find it please share it on the GitHub repo as it would help me improve the experience.

Best!

Oh that makes sense! I think I could create an empty table with columns that I want, then append the results of the Audit Log onto that table and do a Remove Other Columns. It works for me as I know what columns that I want, but still doesn’t work for all possible combinations like you were talking about.

that’s what I had in mind. That would be the best approach!

Hi – I just wanted to say thank you for this connector – really appreciate you publishing this.

Hi Miguel Great Tool.

we have a project of Power BI reports Embedded in Dynamics 365 portal (iframe) is there a way to track the usage adoption of does embedded reports?

Any tips or guidance will be great .

Thanks

It depends! It depends on what type of information you’re looking for and how you’re embedding such reports.

I’d suggest that you check out the documentation of the REST API to see what’s available and see which endpoint could be of interest to you. Then it also depends on your level of permissions to such objects.

In theory, you can use the connector to query almost all endpoints from the API.

Best!

Awesome. I am wondering if you can create a custom connector in Power Automate that can do the same please?

Hey,

Thanks for thinking about me for this request!

I’m not sure I’m the right person for it. I wouldn’t even know where to begin or what information to even show as I’m not too curious about the REST API that might be used by Power Automate and don’t really see it as a fun personal project (and I’m also fully booked with the the other ones that I’m doing).

Have you thought about making your idea public on the Power BI ideas site?

https://ideas.powerbi.com/ideas/

That would be the best way to pass your feedback on to the Power BI team and perhaps have the Power Automate create the connector that you’re looking for.

Best!

Miguel,

Thank you and others for creating and sharing the Power BI REST API connector. This is awesome!

2 issues I am having.

a) The GetData and GatRawData functions import as tables, not functions.

b) The Event Activity Log function

I would like to pass todays date as the end date and todays date -30 as the start date without managing parameters so that when I refresh it will get the last 30 days data.

Do you have any suggestions on how to approach passing dates into the function?

hey Chuck!

Thanks for trying out the connector. Please post all issues and feedback directly on the GitHub repo.

The a) seems really odd! not sure how that could be happening. PI don’t see it happening in my environment with the latest version of the connector. Please make sure to post some repro steps on the GitHub issue so me and the rest of the contributors can validate.

For b), incremental refresh could be a good option as long as you are able to satisfy the need for a full table schema that should always be met regardless of the output of the function. Other than that, you’d need to modify the function or create a new one to satisfy your specific needs which shouldn’t be too difficult.

Best!

Thank you very much for this.

I have an issue, i’m not able to open .sln file for adding some fonctions. I have Visual Studio 2017 SSDT.

Hey!

Unfortunately, I wouldn’t know where to even begin to troubleshoot this scenario. I’d encourage you to post the issue on the official GitHub repo of the SDK (link below):

https://github.com/microsoft/DataConnectors/issues

Hi Miguel. Very useful tool, thank you for this.

We have some embedded reports which clog up the user activity data, since they have the operation: “GenerateEmbedToken”. It makes the API call slow, since there is a lot of these rows. I am not interest in reporting on these, but instead only on operation: “ViewReport”.

Microsoft uses this example as how to filter these out in the API call:

GET https://api.powerbi.com/v1.0/myorg/admin/activityevents?startDateTime='2019-08-13T07:55:00.000Z'&endDateTime='2019-08-13T08:55:00.000Z‘&$filter=Activity eq ‘ViewReport’

Can I add this filter in the API call using your custom connector? I am not sure where i would state this. This would be my M code after using the ‘Event Activity Log function. I tried adding the filter after the dates, but without luck unfortunately.

let

Source = #”Event Activity Log”(#date(2022, 1, 1), #date(2022, 1, 1))

in

Source

Hey!

You’ll need to step into the code of the custom connector and modify it so you can add other query parameters to it (like the filter field for example).

The way that I created the function is that it basically gets everything without any sort of filters at all (besides the dates).

Below is the link to the exact lines from the connector where you’ll see what I implemented:

https://github.com/migueesc123/PowerBIRESTAPI/blob/6405c320938ef7705a46f3e22edb0d5a2f4ebdbe/Power%20BI%20API/Power%20BI%20API/PBIAPI.pq#L137-L178

You can read more about the license of the custom connector (the project itself) from GitHub as well, but you’re welcome to fork the repo and modify it to your specific needs.

Hi Miguel.

Thank you for taking the time to get back to me.

I have taken a look at the code and tried to modify it to fit my need. By looking at the highlighted code in your link, I would assume that the only change I need to make is this (picture, green highlight). However, it still returns all activities.

Did I miss another spot where I should specify this?

Have a good one.

I’m actually on a Mac nowadays without a way to test things out, but usually what I used to do was do a lot of trial and error to make sure that everything was running as intended.

That might be the line of the code that you need to modify, but the best way is to always test things out and see if you’re getting the result that you’re expecting. I also recommend that you use something like Fiddler to check the calls being emitted by the SDK or Power BI Desktop and see if everything is running as desired.

Hello Miguel,

Thanks a milion for creating and sharing this connector! It is awesome and makes it super-simple to work with PBI API.

Just a one rookie question – when I open Dataflow Transactions, it returns me always only 10 last records for any dataflow despite I can see more history in the web front-end. I tried checking the web but without any luck.

Would you have some idea how to get over this / what might be the cause? (nature of API / settings of my PBI Desktop / admin settings of my company for API…)

Thanks again!

Mike

Hey Mike!

Thanks for the kind words.

While the initial release of this connector is something that I created on my own, a lot of pieces were later added by other contributors to the project. This particular piece is one of those components that was added by other contributors who might know more about the intricacies of it.

Perhaps the endpoint only provides N number of rows? maybe there’s a missing implementation of Pagination at the connector level? I’m not that familiar with this specific endpoint or the implementation that was done by the contributors of the project, so I wouldn’t be able to exactly tell you what might be missing.

I highly encourage you to post your scenario and any bugs that you may have found to the issues section of the GitHub repo so that all of the contributors to the project can take a look at it and provide their feedback. Below is the link to that section:

https://github.com/migueesc123/PowerBIRESTAPI/issues

Best!

Muchisimas gracias por esta contribución.

Llevaba tiempo buscando algo así. Hasta ahora tenia que resolverlo usando powershell, pero esto permite una integración mucho mas simple.

Hello,

Great work with the connector. I have a question for GetData Functions. It’s asking for a URL. Which URL do I need to provide for this?

Thank you,

hey!

The function comes with some documentation at the function level. You can go into the Power Query Editor and see it from there. It’s effectively the endpoint that you’re trying to connect.

Thanks a lot for this awesome connector. I have been struggling to get this data and set this up in an automated way and I came across this connector and it really simplifies things.

I am trying to setup incremental refresh for the event log, but not sure how exactly to do it. As we are using REST APIs there is no query folding here so I don’t understand how incremental refresh would work here. Would appreciate some pointers to help set this up.

Hey!

These other posts might help you with that:

https://www.thepoweruser.com/2018/05/08/first-thoughts-on-power-bi-incremental-refresh/

https://www.thepoweruser.com/2020/01/18/power-bi-event-activity-log-api-easy-way-to-get-it/

Hi Miguel,

Many thanks for the excellent Connector. I’m hoping you can assist please…

I am getting an error in the last row of the Dataflow Tables (namely Tables 7,8,9 & 10)…

“Expression.Error: The field ‘name’ of the record wasn’t found.

Details:

objectId=67e764c8-0311-4fd0-bcc0-72c7993b324c

configuredBy=fbrowne@bordgais.ie

users=[List]”

…which prohibits me from loading into the model.

In Power Query Editor I can see all the records in these tables but with the final row being ‘error’ across all columns. The ‘Remove error’ and ‘Replace error’ functions won’t resolve this issue.

Can you advise?

Many thanks,

Jonny

Hey Jonny!

Would you mind posting this on the issues section of the GitHub repo? I didn’t add the code for that call, so I’m not the best to answer the question about what might be wrong with it.

We also accept any contribution to the GitHub repo in the event that you’d like to make any changes to the code of the connector.

Best!

YOU ARE A LEGEND!!

Hi Miguel,

I am getting the below error when trying to refresh the dataset using this API. Any suggestions?

Data source error: Unable to refresh the model (id=15154) because it references an unsupported data source.

Cluster URI: WABI-AUSTRALIA-SOUTHEAST-B-PRIMARY-redirect.analysis.windows.net

Activity ID: XXXXXXXXXXX

Request ID: XXXXXXXXXXXX

Time: 2022-07-21 22:47:01Z

hey! for questions related to the actual connector, please post them on the issues section of the GitHub repro.

Best!

Thk u very much! This connector is very helpful to me. As I’ve encountered problems in calling Power BI Rest API by Postman, I don’t know which parameters I should use. With this connector, I don’t need to call by Postman

I’m on the next step of configuring a schedule refresh. Hopefully it works!

Hey, are there possibly any plans to have this awesome connector certified, so its available in dataflows?

hey! The best thing that you can do is reach out to the Power BI team to request it 🙂 only they can certify it

It looks like you can submit it, @Miguel.

https://learn.microsoft.com/en-us/power-query/connectorcertification

Unfortunately, no. As the documentation states on that article, only the “data source owner” can take that action. I’m not the owner of such data source, so can’t really do that 🙁

You can still get in touch with the Power BI team so you can request them to do it.

Well I am not seeing API in Custom Connectors even if I copied it in new created folder. Am I doing something wrong? What kind of ADmin rights do I need to have actually in Power BI for this?